Russ Allbery: Review: The Fed Unbound

| Publisher: | Columbia Global Reports |

| Copyright: | 2022 |

| ISBN: | 1-7359137-1-5 |

| Format: | Kindle |

| Pages: | 156 |

And all of this is because I can read, write, and articulate. Many others may not even have a voice or a platform.

Even getting this temporary progressive disability certificate took more than 4 months of running from one place to another, 4 months of cumulative effort. I can only share this from my own experience; who knows how much more others might have suffered for the same thing. In my case, a review will happen after 5 years; in most other cases, they have given only 1 year. Of course, this does justify people's jobs, and perhaps that is partly the reason. At times like these I really miss being able to hear; otherwise I could have documented a lot more of other people's suffering.

And just so people understand: this is happening in the heart of a city whose population easily exceeds 6 million and which is supposed to be progressive. I do appreciate and understand the difficulties that the doctors are placed under.

A couple of weeks ago, I read a blog post by former Debian Developer Lars Wirzenius offering a free basic (6hr) course on the Rust language to interested free software and open source software programmers.

I know Lars offers training courses in programming. Besides having known him for ~20 years and being proud to count him as a friend, I have worked with him on a couple of projects (e.g. he is upstream for vmdb2, which I maintain in Debian and use for generating the Raspberry Pi Debian images). He is a talented programmer, and a fun guy to be around.

I was admitted to the first cohort of students of this course (please note I'm not committing him to run free courses ever again! He has said he would consider doing so, especially to offer a different time better suited for people in Asia).

I have wanted to learn some Rust for quite some time. About a year ago, I bought a copy of The Rust Programming Language, the canonical book for learning the language, and started reading it. But I lacked motivation and lost steam halfway through, without having done even a simple real project beyond the book's exercises.

How has this been? I have enjoyed the course. I must admit I expected it to be more hands-on from the beginning, but Rust is such a large language, and it introduces so many new, surprising concepts. Session two did have two somewhat simple hands-on challenges; saying they were somewhat simple does not mean we didn't have to sweat to get them to compile and work correctly!

I know we will finish this Saturday, and I'll still be a complete newbie to Rust. I know the only real way to wrap my head around a language is to actually have a project that uses it. And I have some ideas in mind. However, I don't really feel confident enough to approach an already existing project and start meddling with it, trying to contribute.

What does Rust have that makes it so different? Bufff... Variable ownership (borrow checking) and value lifetimes are the most obvious, salient ideas, but they are relatively simple, as you just cannot forget about them. But understanding (and adopting) idiomatic constructs such as the pervasive use of enums, and understanding that errors always have to be catered for by using expect() and Result<T,E>... It will take some time to be at ease developing in it, if I ever reach that stage!
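To make those ideas a bit more concrete, here is a small sketch putting ownership, borrowing, an explicit lifetime, enums and `Result<T, E>` (with both `expect()` and explicit `match`-based handling) side by side. It is not taken from the course: the `Command` and `ParseError` enums and the `command_name`, `parse_command` and `apply` functions are hypothetical names invented for illustration, and only the standard library is used.

```rust
use std::num::ParseIntError;

/// A tiny, made-up command language: each enum variant can carry its own data.
#[derive(Debug, PartialEq)]
enum Command {
    Add(i64),
    Reset,
}

/// Errors are ordinary enum values too, returned through Result<T, E>.
#[derive(Debug, PartialEq)]
enum ParseError {
    UnknownCommand(String),
    BadNumber(ParseIntError),
}

/// Explicit lifetime: the returned &str borrows from `line`,
/// so the compiler will not let it outlive `line`.
fn command_name<'a>(line: &'a str) -> &'a str {
    line.split_whitespace().next().unwrap_or("")
}

/// Borrows its input (&str), so the caller keeps ownership of its String.
fn parse_command(line: &str) -> Result<Command, ParseError> {
    let mut parts = line.split_whitespace();
    match parts.next() {
        Some("add") => {
            // `?` returns early with Err(BadNumber(..)) if the number does not parse.
            let n = parts
                .next()
                .unwrap_or("")
                .parse::<i64>()
                .map_err(ParseError::BadNumber)?;
            Ok(Command::Add(n))
        }
        Some("reset") => Ok(Command::Reset),
        other => Err(ParseError::UnknownCommand(other.unwrap_or("").to_string())),
    }
}

/// `match` must cover every variant; forgetting one is a compile error.
fn apply(total: i64, cmd: &Command) -> i64 {
    match cmd {
        Command::Add(n) => total + n,
        Command::Reset => 0,
    }
}

fn main() {
    let input = String::from("add 40");

    // Two shared borrows of `input`; it is still owned (and usable) afterwards.
    let name = command_name(&input);
    // expect() turns an Err into a panic with this message: fine for a demo,
    // but it is a deliberate choice not to handle the error.
    let cmd = parse_command(&input).expect("input should be a valid command");
    println!("{name}: {cmd:?}");

    // Moving the String transfers ownership; any later use of `input`
    // would be rejected by the borrow checker ("borrow of moved value").
    let owned = input;
    println!("the String is now owned by `owned`: {owned}");

    let total = apply(apply(0, &cmd), &Command::Add(2));
    println!("total = {total}");

    // Handling the error case explicitly instead of calling expect().
    match parse_command("add forty") {
        Ok(c) => println!("parsed {c:?}"),
        Err(e) => println!("could not parse: {e:?}"),
    }
}
```

Running it prints `total = 42` among its output. Adding a third variant to `Command` without handling it in `apply` makes the program fail to compile, which is the "you cannot forget about it" property in practice.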

Oh, FWIW, some related reading: I am halfway through an interesting article, published in March in the Communications of the ACM magazine, titled "Here We Go Again: Why Is It Difficult for Developers to Learn Another Programming Language?", which presents a point we don't always consider: if I'm a proficient programmer in the X programming language and want to use the Y programming language, learning it should be easier for me than for the casual bystander, or not? After all, I already have a background in programming! But it turns out that the mental constructs we build for a given language might hamper our learning of a very different one. This article presents three interesting research questions:

I have just filed a complaint with the CRTC about my phone provider's outrageous fees. This is a copy of the complaint.

I am traveling to Europe, specifically to Ireland, for 6 days for a work meeting. I thought I could use my phone there. So I looked at my phone provider's services in Europe, and found the "Fido roaming" services (https://www.fido.ca/mobility/roaming). The fees, at the time of writing, are fifteen (15!) dollars PER DAY to get access to my regular phone service (not unlimited!!). If I do not use that "roaming" service, the fees are:

I have no illusions about this having any effect. I thought of filing such a complaint after the Rogers outage as well, but felt I had less standing there because I wasn't affected that much (e.g. I didn't have a life-threatening situation myself). This, however, was ridiculous and frustrating enough to trigger this outrage. We'll see how it goes...

"We will respond to you within 10 working days."

Dear Antoine Beaupré: Thank you for contacting us about your mobile telephone international roaming service plan rates concern with Fido Solutions Inc. (Fido). In Canada, mobile telephone service is offered on a competitive basis. Therefore, the Canadian Radio-television and Telecommunications Commission (CRTC) is not involved in Fido's terms of service (including international roaming service plan rates), billing and marketing practices, quality of service issues and customer relations. If you haven't already done so, we encourage you to escalate your concern to a manager if you believe the answer you have received from Fido's customer service is not satisfactory.

Based on the information that you have provided, this may also appear to be a Competition Bureau matter. The Competition Bureau is responsible for administering and enforcing the Competition Act, and deals with issues such as false or misleading representations, deceptive marketing practices and collusion. You can reach the Competition Bureau by calling 1-800-348-5358 (toll-free), by TTY (for deaf and hard of hearing people) by calling 1-866-694-8389 (toll-free). For more contact information, please visit http://www.competitionbureau.gc.ca/eic/site/cb-bc.nsf/eng/00157.html

When consumers are not satisfied with the service they are offered, we encourage them to compare the products and services of other providers in their area and look for a company that can better match their needs. The following tool helps to show choices of providers in your area: https://crtc.gc.ca/eng/comm/fourprov.htm Thank you for sharing your concern with us.

In other words, complain to Fido, or change providers. Don't complain to us, we don't manage the telcos, they self-regulate. Great job, CRTC. This is going great. This is exactly why we're one of the most expensive countries on the planet for cell phone service.

Date: Tue, 13 Sep 2022 10:10:00 -0400
From: Fido DONOTREPLY@fido.ca
To: REDACTED
Subject: Roaming notification email (Fido Roaming Welcome Confirmation)

Fido
Date: September 13, 2022
Account number: [redacted]

Hello Antoine Beaupré! We are writing to let you know that at least one user registered on your account has recently connected to a network while roaming.

Below is the roaming welcome text message sent to the user (or users), which contained the applicable roaming rates.

Roaming welcome text message
Recipient: REDACTED
Date and time: 2022-09-13 / 10:10:00

"Hi, Fido here: Welcome to your destination! You're enrolled in Fido Nomade, so use your data, talk and text just like you do at home. Since March 1, 2022, the rate for this destination is $15/day (+ taxes), valid every day until 11:59 PM ET, no matter which time zone you are in. Bon voyage! Questions? Visit fido.ca/m/itinerance or dial +15149333436 (toll-free). Need help?"

- PLAN A TRIP WITH Fido Nomade™: Discover our roaming options and stay connected abroad without worrying about your bill.

- Manage your account: Manage your Fido products and services at home or on the road with My Account.

This email is generated automatically; please do not reply. This email (and any attachment) is confidential. If you are not the intended recipient, please delete this message and destroy all copies.

FIDO SOLUTIONS, 800, RUE DE LA GAUCHETIÈRE OUEST, BUREAU 4000, MONTRÉAL (QUÉBEC) H5A 1K3

I found that message utterly confusing (and yes, I can read French). Basically, it says that some user (presumably me!) connected to the network while roaming. I had just disabled airplane mode on my phone to debug a Syncthing bug, but had not enabled roaming. So this message seemed to say that I would be charged $15 (per DAY!) for roaming from now on. Confused, I tried their live chat to clarify things, worried I would get charged even more for calling tech support on *611. This is a transcript of the chat:

F: Hi! What are we doing today? Type in your question or choose from the options below:

- Track my Equipment Order
- View Bill Online
- Payment Options
- iPhone 14 Pre-Order

A: i received a message about roaming while abroad but i did not enable roaming on my phone, will i be charged anyways?

F: I think I know what you're asking for. Select the topic that best matches your request or try rephrasing your question.

A: no

F: Thank you, this will help us to improve! Would you like to chat with a specialist? Chat with a specialist I'll get a specialist to help you with this. It appears that you're not signed in. Your session may have timed out. To save time and identify your account details, please sign in to My Account.

[... delay ...] Have any questions specific to your Fido account? To service you faster, please identify yourself by completing the form below.

A: Personal info Form submitted

F: Thank you! I'll connect you with the next available specialist. Your chat is being transferred to a Live Chat agent. Thanks for your patience. We are here to assist you and we kindly ask that our team members be treated with respect and dignity. Please note that abuse directed towards any Consumer Care Specialist will not be tolerated and will result in the termination of your conversation with us. All of our agents are with other customers at the moment. Your chat is in a priority sequence and someone will be with you as soon as possible. Thanks! Thanks for continuing to hold. An agent will be with you as soon as possible. Thank you for your continued patience. We're getting more Live Chat requests than usual so it's taking longer to answer. Your chat is still in a priority sequence and will be answered as soon as an agent becomes available. Thank you so much for your patience we're sorry for the wait. Your chat is still in a priority sequence and will be answered as soon as possible. Hi, I'm [REDACTED] from Fido in [REDACTED]. May I have your name please?

A: hi i am antoine, nice to meet you sorry to use the live chat, but it's not clear to me i can safely use my phone to call support, because i am in ireland and i'm worried i'll get charged for the call

F: Thank You Antoine , I see you waited to speak with me today, thank you for your patience. Apart from having to wait, how are you today?

A: i am good thank you

A: should i restate my question?

F: Yes please what is the concern you have?

A: i have received an email from fido saying i someone used my phone for roaming it's in french (which is fine), but that's the gist of it i am traveling to ireland for a week i do not want to use fido's services here... i have set the phon eto airplane mode for most of my time here

F: The SMS just says what will be the charges if you used any services.

A: but today i have mistakenly turned that off and did not turn on roaming well it's not a SMS, it's an email

F: Yes take out the sim and keep it safe. Turun off or On for roaming you cant do it as it is part of plan.

A: wat

F: if you used any service you will be charged if you not used any service you will not be charged.

A: you are saying i need to physically take the SIM out of the phone? i guess i will have a fun conversation with your management once i return from this trip not that i can do that now, given that, you know, i nee dto take the sim out of this phone fun times

F: Yes that is better as most of the customer end up using some kind of service and get charged for roaming.

A: well that is completely outrageous roaming is off on the phone i shouldn't get charged for roaming, since roaming is off on the phone i also don't get why i cannot be clearly told whether i will be charged or not the message i have received says i will be charged if i use the service and you seem to say i could accidentally do that easily can you tell me if i have indeed used service sthat will incur an extra charge? are incoming text messages free?

F: I understand but it is on you if you used some data SMS or voice mail you can get charged as you used some services. And we cant check anything for now you have to wait for next bill. and incoming SMS are free rest all service comes under roaming. That is the reason I suggested take out the sim from phone and keep it safe or always keep the phone or airplane mode.

A: okay can you confirm whether or not i can call fido by voice for support? i mean for free

F: So use your Fido sim and call on +1-514-925-4590 on this number it will be free from out side Canada from Fido sim.

A: that is quite counter-intuitive, but i guess i will trust you on that thank you, i think that will be all

F: Perfect, Again, my name is [REDACTED] and it's been my pleasure to help you today. Thank you for being a part of the Fido family and have a great day!

A: you too

So, in other words:

*611, and

instead on that long-distance-looking phone number, and yes, that means turning off airplane mode and putting the SIM card in, which contradicts step 3.

Also, the number they gave me (+1-514-925-4590) is different from the one provided in the email (15149333436). So who knows what would have happened if I had called the latter. The former is mentioned on their contact page.

I guess the next step is to call Fido over the phone and talk to a manager, which is what the CRTC told me to do in the first place...

I ended up talking with a manager (another 1h phone call) and they confirmed there is no other package available at Fido for this. At best, they can give me a credit to refund me if I use roaming by mistake, but that's it. The manager also confirmed that I cannot know if I have actually used any data before reading the bill, which is issued on the 15th of every month, but only available... three days later, at which point I'll be back home anyway.

Fantastic.

CONNECT 2400. Now your computer was bridged to the other; anything going out your serial port was encoded as sound by your modem and decoded at the other end, and vice-versa.

But what, exactly, was the other end?

It might have been another person at their computer. Turn on local echo, and you can see what they did. Maybe you'd send files to each other. But in my case, the answer was different: PC Magazine.

71510,1421. CompuServe had forums, and files. Eventually I would use TapCIS to queue up things I wanted to do offline, to minimize phone usage online.

CompuServe eventually added a gateway to the Internet. For the sum of somewhere around $1 a message, you could send or receive an email from someone with an Internet email address! I remember the thrill of one time, as a kid of probably 11 years, sending a message to one of the editors of PC Magazine and getting a kind, if brief, reply back!

But inevitably I had

complete.org, as well. At the time, the process was a bit lengthy and involved downloading a text file form, filling it out in a precise way, sending it to InterNIC, and probably mailing them a check. Well I did that, and in September of 1995, complete.org became mine. I set up sendmail on my local system, as well as INN to handle the limited Usenet newsfeed I requested from the ISP. I even ran Majordomo to host some mailing lists, including some that were surprisingly high-traffic for a few-times-a-day long-distance modem UUCP link!

The modem client programs for FreeBSD were somewhat less advanced than those for OS/2, but I believe I wound up using Minicom or Seyon to keep dialing out to BBSs and, I believe, to keep using Learning Link. So all the while I was setting up my local BBS, I continued to have access to the text Internet, which for me consisted chiefly of Gopher.

Under Canadian Radio-television and Telecommunications Commission (CRTC) rules in place since 2017, telecom networks are supposed to ensure that cellphones are able to contact 911 even if they do not have service.

I could personally confirm that my phone couldn't reach 911 services, because all calls would fail: the problem was that towers were still up, so your phone wouldn't fall back to alternative service providers (which could have resolved the issue). I can only speculate as to why Rogers didn't take cell phone towers out of the network to let phones work properly for 911 service, but it seems like a dangerous game to play. Hilariously, the CRTC itself didn't have a reliable phone service due to the service outage:

"Please note that our phone lines are affected by the Rogers network outage. Our website is still available: https://crtc.gc.ca/eng/contact/" (source: https://mobile.twitter.com/CRTCeng/status/1545421218534359041)

I wonder if they will file a complaint against Rogers themselves about this. I probably should. It seems the federal government thinks more of the same medicine will fix the problem, and has told companies they should "help" each other in an emergency. I doubt this will fix anything, and it could actually make things worse if competitors interoperate more, as it could cause multi-provider, cascading failures.

"He pulls from Ethan Zuckerman's idea of a web that is 'plural in purpose': that just as pool halls, libraries and churches each have different norms, purposes and designs, so too should different places on the internet. To achieve this, Tarnoff wants governments to pass laws that would make the big platforms unprofitable and, in their place, fund small-scale, local experiments in social media design. Instead of having platforms ruled by engagement-maximizing algorithms, Tarnoff imagines public platforms run by local librarians that include content from public media."

(Links mine: the Washington Post obviously prefers not to link to the real web; it doesn't link to Zuckerman's site at all and suggests Amazon for the book, a cynical example.)

And in another example of how the private sector has failed us, there was recently a fluke in the AMBER alert system where the entire province was warned about a loose shooter in Saint-Elzéar, except the people in the town, because they have spotty cell phone coverage. In other words, millions of people received a strongly worded, "life-threatening" alert for a town sometimes hours away, except the people most vulnerable to the threat. Not missing a beat, the CAQ party is promising more of the same medicine again, giving more money to telcos to fix the problem and proposing to spend three billion dollars on private infrastructure.

| Series: | Monk & Robot #2 |

| Publisher: | Tordotcom |

| Copyright: | 2022 |

| ISBN: | 1-250-23624-X |

| Format: | Kindle |

| Pages: | 151 |

| Series: | Eternal Sky #3 |

| Publisher: | Tor |

| Copyright: | April 2014 |

| ISBN: | 0-7653-2756-2 |

| Format: | Hardcover |

| Pages: | 429 |

The Reproducible Builds project relies on several projects, supporters and sponsors for financial support, but they are also valued as ambassadors who spread the word about the project and the work that we do.

This is the third instalment in a series featuring the projects, companies and individuals who support the Reproducible Builds project. If you are a supporter of the Reproducible Builds project (of whatever size) and would like to be featured here, please get in touch with us at contact@reproducible-builds.org.

We started this series by featuring the Civil Infrastructure Platform project and followed this up with a post about the Ford Foundation. Today, however, we'll be talking with Dan Romanchik, Communications Manager at Amateur Radio Digital Communications (ARDC).

Chris: How might you relate the importance of amateur radio and similar technologies to someone who is non-technical?

Dan: Amateur radio is important in a number of ways. First of all, amateur radio is a public service. In fact, the legal name for amateur radio is the Amateur Radio Service, and one of the primary reasons that amateur radio exists is to provide emergency and public service communications. All over the world, amateur radio operators are prepared to step up and provide emergency communications when disaster strikes or to provide communications for events such as marathons or bicycle tours.

Second, amateur radio is important because it helps advance the state of the art. By experimenting with different circuits and communications techniques, amateurs have made significant contributions to communications science and technology.

Third, amateur radio plays a big part in technical education. It enables students to experiment with wireless technologies and electronics in ways that aren't possible without a license. Amateur radio has historically been a gateway for young people interested in pursuing a career in engineering or science, such as network or electrical engineering.

Fourth (and this point is a little less obvious than the first three), amateur radio is a way to enhance international goodwill and community. Radio knows no boundaries, of course, and amateurs are therefore ambassadors for their country, reaching out to all around the world.

Beyond amateur radio, ARDC also supports and promotes research and innovation in the broader field of digital communication and information and communication science and technology. Information and communication technology plays a big part in our lives, be it for business, education, or personal communications. For example, think of the impact that cell phones have had on our culture. The challenge is that much of this work is proprietary and owned by large corporations. By focusing on open source work in this area, we help open the door to innovation outside of the corporate landscape, which is important to overall technological resiliency.

Dan: Nearly forty years ago, a group of visionary hams saw the future possibilities of what was to become the internet and requested an address allocation from the Internet Assigned Numbers Authority (IANA). That allocation included more than sixteen million IPv4 addresses, 44.0.0.0 through 44.255.255.255. These addresses have been used exclusively for amateur radio applications and experimentation with digital communications techniques ever since. In 2011, the informal group of hams administering these addresses incorporated as a nonprofit corporation, Amateur Radio Digital Communications (ARDC). ARDC is recognized by IANA, ARIN and the other Internet Registries as the sole owner of these addresses, which are also known as AMPRNet or 44Net.

Over the years, ARDC has assigned addresses to thousands of hams on a long-term loan (essentially acting as a zero-cost lease), allowing them to experiment with digital communications technology. Using these IP addresses, hams have carried out some very interesting and worthwhile research projects and developed practical applications, including TCP/IP connectivity via radio links, digital voice, telemetry and repeater linking.

Even so, the amateur radio community never used much more than half the available addresses, and today, less than one third of the address space is assigned and in use. This is one of the reasons that ARDC, in 2019, decided to sell one quarter of the address space (approximately 4 million IP addresses) and establish an endowment with the proceeds. This endowment now funds ARDC's suite of grants, including scholarships, research projects, and of course amateur radio projects. Initially, ARDC was restricted to awarding grants to organizations in the United States, but is now able to provide funds to organizations around the world.
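The address arithmetic behind these figures can be checked with a short sketch: the 44.0.0.0/8 block holds 2^24 = 16,777,216 addresses ("more than sixteen million"), and one quarter of it is a /10 of 2^22 = 4,194,304 addresses ("approximately 4 million"). The helpers `prefix_size` and `prefix_range` are hypothetical, and the sketch assumes the quarter that was sold was a single aligned /10; the interview does not say which block it was, so the loop simply lists all four candidates.

```rust
use std::net::Ipv4Addr;

/// Number of addresses in an IPv4 prefix of the given length (0..=32).
fn prefix_size(prefix_len: u32) -> u64 {
    1u64 << (32 - prefix_len)
}

/// First and last address (inclusive) of the prefix containing `addr`.
fn prefix_range(addr: Ipv4Addr, prefix_len: u32) -> (Ipv4Addr, Ipv4Addr) {
    // Shifting a u32 by 32 would overflow, so /0 gets an all-zero mask explicitly.
    let mask: u32 = if prefix_len == 0 { 0 } else { u32::MAX << (32 - prefix_len) };
    let first = u32::from(addr) & mask;
    let last = first | !mask;
    (Ipv4Addr::from(first), Ipv4Addr::from(last))
}

fn main() {
    let net44 = Ipv4Addr::new(44, 0, 0, 0);
    let (first, last) = prefix_range(net44, 8);
    let size8 = prefix_size(8);
    println!("44.0.0.0/8: {first} to {last}, {size8} addresses");

    // One quarter of a /8 is two more prefix bits, i.e. a /10.
    let quarter = size8 / 4;
    assert_eq!(quarter, prefix_size(10));
    println!("one quarter: {quarter} addresses (a /10)");

    // The four aligned /10 blocks that together make up 44.0.0.0/8.
    let step = prefix_size(10) as u32;
    for i in 0..4u32 {
        let (f, l) = prefix_range(Ipv4Addr::from(u32::from(net44) + i * step), 10);
        println!("{f}/10: {f} to {l}");
    }
}
```

It prints 16777216 addresses for the /8 and 4194304 for one quarter of it, i.e. each of the four /10 blocks.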

Dan: Nearly forty years ago, a group of visionary hams saw the future

possibilities of what was to become the internet and requested an address

allocation from the

Internet Assigned Numbers Authority (IANA). That

allocation included more than sixteen million IPv4 addresses, 44.0.0.0

through 44.255.255.255. These addresses have been used exclusively for

amateur radio applications and experimentation with digital communications

techniques ever since. In 2011, the informal group of hams administering these

addresses incorporated as a nonprofit corporation, Amateur Radio Digital

Communications (ARDC). ARDC is recognized by IANA,

ARIN and the other Internet Registries as the sole

owner of these addresses, which are also known as AMPRNet

or 44Net.

Over the years, ARDC has assigned addresses to thousands of hams on a long-term loan (essentially acting as a zero-cost lease), allowing them to experiment with digital communications technology. Using these IP addresses, hams have carried out some very interesting and worthwhile research projects and developed practical applications, including TCP/IP connectivity via radio links, digital voice, telemetry and repeater linking.

Even so, the amateur radio community never used much more than half the available addresses, and today, less than one third of the address space is assigned and in use. This is one of the reasons that ARDC, in 2019, decided to sell one quarter of the address space (or approximately 4 million IP addresses) and establish an endowment with the proceeds. This endowment now funds ARDC's suite of grants, including scholarships, research projects and, of course, amateur radio projects. Initially, ARDC was restricted to awarding grants to organizations in the United States, but is now able to provide funds to organizations around the world.
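The address arithmetic above is easy to check. A minimal sketch using Python's standard `ipaddress` module: the range 44.0.0.0 through 44.255.255.255 is the /8 network 44.0.0.0/8, and one quarter of a /8 is a /10. The interview does not say which quarter was sold, so the sketch simply enumerates all four /10 blocks rather than assuming one.

```python
import ipaddress

# The whole AMPRNet/44Net allocation: 44.0.0.0 through 44.255.255.255.
net44 = ipaddress.ip_network("44.0.0.0/8")
total = net44.num_addresses  # 2**24 addresses

# Splitting a /8 two prefix bits deeper (into /10s) yields four equal quarters.
quarters = list(net44.subnets(prefixlen_diff=2))
quarter_size = quarters[0].num_addresses  # 2**22 addresses

print(f"{net44}: {total:,} addresses ({net44[0]} to {net44[-1]})")
for block in quarters:
    print(f"  {block}: {block.num_addresses:,} addresses")
print(f"one quarter is {quarter_size:,} addresses, about {quarter_size / 1e6:.1f} million")
```

This agrees with the figures quoted: 16,777,216 addresses in total ("more than sixteen million"), and a quarter of 4,194,304 ("approximately 4 million").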

Dan: We are really proud of our grant to the Hoopa Valley Tribe in California. With a population of nearly 2,100, their reservation is the largest in California. Like everywhere else, the COVID-19 pandemic hit the reservation hard, and the lack of broadband internet access meant that 130 children on the reservation were unable to attend school remotely.

The ARDC grant allowed the tribe to address the immediate broadband needs in the Hoopa Valley, as well as encourage the use of amateur radio and other two-way communications on the reservation. The first nation was able to deploy a network that provides broadband access to approximately 90% of the residents in the valley. And, in addition to bringing remote education to those 130 children, the Hoopa now use the network for remote medical monitoring and consultation, adult education, and other applications.

Other successes include our grants to:


To learn more about ARDC in general, please visit our website at https://www.ampr.org.

To learn more about 44Net, go to https://wiki.ampr.org/wiki/Main_Page.

And, finally, to learn more about our grants program, go to https://www.ampr.org/apply/


Welcome to the March 2022 report from the Reproducible Builds project! In our monthly reports we outline the most important things that we have been up to over the past month.


The in-toto project was accepted as an incubating project within the Cloud Native Computing Foundation (CNCF). in-toto is a framework that protects the software supply chain by collecting and verifying relevant data. It does so by enabling libraries to collect information about software supply chain actions and then allowing software users and/or project managers to publish policies about software supply chain practices that can be verified before deploying or installing software. The CNCF hosts a number of critical components of the global technology infrastructure under the auspices of the Linux Foundation. (View full announcement.)
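To make the idea of verifiable supply-chain metadata concrete, here is a deliberately simplified Python sketch. It is not in-toto's metadata format or API: the `Link` record and `verify_chain` function are hypothetical stand-ins. It borrows in-toto's terms "materials" (inputs) and "products" (outputs) to show the core idea: each step records hashes of what it consumed and produced, and a policy is checked against those records before anything is installed.

```python
import hashlib
from dataclasses import dataclass, field
from typing import Dict, List


def sha256(data: bytes) -> str:
    """Hex SHA-256 digest of an artifact's bytes."""
    return hashlib.sha256(data).hexdigest()


@dataclass
class Link:
    """Hypothetical, simplified record of one supply-chain step (not in-toto's schema)."""
    step: str
    materials: Dict[str, str] = field(default_factory=dict)  # path -> hash consumed
    products: Dict[str, str] = field(default_factory=dict)   # path -> hash produced


def verify_chain(links: List[Link], expected_steps: List[str]) -> List[str]:
    """Check a toy policy; returns a list of problems (empty list means OK)."""
    # Policy part 1: exactly the expected steps, in the expected order.
    actual_steps = [link.step for link in links]
    if actual_steps != expected_steps:
        return [f"expected steps {expected_steps}, got {actual_steps}"]
    # Policy part 2: every input to a step must be something the previous step produced.
    problems: List[str] = []
    for prev, nxt in zip(links, links[1:]):
        for path, digest in nxt.materials.items():
            if prev.products.get(path) != digest:
                problems.append(f"{nxt.step}: {path} does not match output of {prev.step}")
    return problems


# Usage: a two-step chain (checkout, then build) that satisfies the toy policy.
source = b"int main(void) { return 0; }\n"
binary = b"pretend this is a compiled binary"
chain = [
    Link("checkout", products={"main.c": sha256(source)}),
    Link("build", materials={"main.c": sha256(source)}, products={"a.out": sha256(binary)}),
]
print(verify_chain(chain, ["checkout", "build"]))  # prints []
```

If the build step had consumed a `main.c` whose hash differs from what checkout produced, `verify_chain` would report it; the real framework adds signatures and much richer rules on top of this basic idea.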


Hervé Boutemy posted to our mailing list with an announcement that the Java Reproducible Central has hit the milestone of "500 fully reproduced builds of upstream projects". Indeed, at the time of writing, according to the nightly rebuild results, 530 releases were found to be fully reproducible, with 100% reproducible artifacts.



GitBOM is a relatively new project to enable build tools to trace every source file that is incorporated into build artifacts. As an experiment and/or proof-of-concept, the GitBOM developers are rebuilding Debian to generate side-channel build metadata for versions of Debian that have already been released. This only works because Debian is (partially) reproducible, so one can be sure that, in the case where build artifacts are identical, any metadata generated during these instrumented builds applies to the binaries that were built and released in the past. More information on their approach is available in the README file in the bomsh repository.
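GitBOM names artifacts by Git object IDs ("gitoids"). The sketch below assumes the SHA-1 Git blob variant of that ID and uses a hypothetical `attach_rebuild_metadata` helper (not the bomsh tooling) to show why reproducibility is what makes the approach work: metadata from an instrumented rebuild is only attached to a released binary when both have the same ID, i.e. are byte-for-byte identical.

```python
import hashlib
from typing import Optional


def gitoid_sha1(data: bytes) -> str:
    """Git-style blob ID: SHA-1 over the header "blob <size>" plus a NUL byte, then the content.

    This matches what `git hash-object` prints for a file containing these bytes.
    """
    header = b"blob " + str(len(data)).encode("ascii") + b"\0"
    return hashlib.sha1(header + data).hexdigest()


def attach_rebuild_metadata(released: bytes, rebuilt: bytes, metadata: dict) -> Optional[dict]:
    """Hypothetical helper: reuse metadata from an instrumented rebuild for a
    previously released binary, but only if the rebuild is bit-for-bit identical."""
    released_id = gitoid_sha1(released)
    if released_id != gitoid_sha1(rebuilt):
        return None  # not reproduced: the metadata describes a different binary
    return {"artifact": released_id, **metadata}


print(gitoid_sha1(b""))  # prints e69de29bb2d1d6434b8b29ae775ad8c2e48c5391 (Git's empty blob)
```

If even one byte of the rebuilt binary differs, the IDs differ and the helper refuses to transfer the metadata, which is exactly why the experiment depends on Debian packages being reproducible.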

[…] implemented what we call package multi-versioning for C/C++ software that lacks function multi-versioning and run-time dispatch […]. It is another way to ensure that users do not have to trade reproducibility for performance. (full PDF)

Kit Martin posted to the FOSSA blog a post titled "The Three Pillars of Reproducible Builds". Inspired by "the shock of infiltrated or intentionally broken NPM packages, supply chain attacks, long-unnoticed backdoors", the post goes on to outline the high-level steps that lead to a reproducible build:


It is one thing to talk about reproducible builds and how they strengthen software supply chain security, but it's quite another to effectively configure a reproducible build. Concrete steps for specific languages are a far larger topic than can be covered in a single blog post, but today we'll be talking about some guiding principles when designing reproducible builds. […]

The article was discussed on Hacker News.

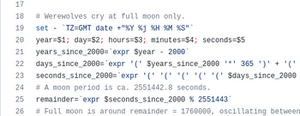

Finally, Bernhard M. Wiedemann noticed that the GNU Helloworld project varies depending on whether it is being built during a full moon! (Reddit announcement, openSUSE bug report)


There will be an in-person Debian Reunion in Hamburg, Germany later this year, taking place from 23–30 May. Although this is a Debian event, there will be some folks from the broader Reproducible Builds community and, of course, everyone is welcome. Please see the event page on the Debian wiki for more information.

Bernhard M. Wiedemann posted to our mailing list about a meetup for Reproducible Builds folks at the openSUSE conference in Nuremberg, Germany.


It was also recently announced that DebConf22 will take place this year as an in-person conference in Prizren, Kosovo. The pre-conference meeting (or "DebCamp") will take place from 10–16 July, and the main talks, workshops, etc. will take place from 17–24 July.


SOURCE_DATE_EPOCH environment variable, both by expanding parts of the existing text […][…] as well as clarifying meaning by removing text in other places […]. In addition, Chris Lamb added a Twitter Card to our website's metadata too […][…][…].
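The `SOURCE_DATE_EPOCH` convention itself is simple: when the variable is set, tools should use it (a count of seconds since 1970-01-01 00:00:00 UTC) instead of the current time for any timestamp they embed in build output. A minimal Python sketch of a build step honouring it follows; the function names are hypothetical, and the environment is passed in as a mapping purely to make the example easy to test.

```python
import os
import time
from datetime import datetime, timezone
from typing import Mapping


def build_timestamp(env: Mapping[str, str] = os.environ) -> int:
    """Timestamp to embed in build output, honouring SOURCE_DATE_EPOCH.

    If SOURCE_DATE_EPOCH is set, it is taken as seconds since the Unix epoch
    and used instead of the current clock.
    """
    value = env.get("SOURCE_DATE_EPOCH")
    if value is not None:
        # int() raises ValueError on a malformed value, so a bad setting fails
        # loudly instead of silently falling back to the current time.
        return int(value)
    return int(time.time())


def build_date_string(env: Mapping[str, str] = os.environ) -> str:
    """Format the build timestamp in UTC so the builder's time zone cannot leak in."""
    stamp = build_timestamp(env)
    return datetime.fromtimestamp(stamp, tz=timezone.utc).strftime("%Y-%m-%d %H:%M:%S UTC")


# Usage: any build run with this setting embeds the same date, whenever it runs.
pinned = {"SOURCE_DATE_EPOCH": "1646092800"}
print(build_date_string(pinned))  # prints 2022-03-01 00:00:00 UTC
```

Formatting in UTC matters as much as reading the variable: a correct epoch rendered in the builder's local time zone would still produce different output on different machines.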

On our mailing list this month:

So now we have 364 source packages for which we have a patch and for which we can show that this patch does not change the build output. Do you agree that with those two properties, the advantages of the 3.0 (quilt) format are sufficient such that the change shall be implemented at least for those 364? […]

buildpath_in_postgres_opcodes, captures_kernel_version_via_CMAKE_SYSTEM, build_id_differences_only, etc.

python-securesystemslib package to version 0.22.0-1 and the in-toto package to 1.2.0-1.

In openSUSE, Bernhard M. Wiedemann posted his usual monthly reproducible builds status report.



diffoscope is our in-depth and content-aware diff utility. Not only can it locate and diagnose reproducibility issues, it can provide human-readable diffs from many kinds of binary formats. This month, Chris Lamb prepared and uploaded versions 207, 208 and 209 to Debian unstable, as well as making the following changes to the code itself:

--append-build-command option […], which was subsequently uploaded to Debian unstable by Holger Levsen.

python-ara, nbformat, chemical-structures, fiat, intel-mediasdk, libao, pcp, tevent, pcp, apr-util, liggghts, kristall, lmod, libranlip, xrt, btrfsmaintenance.

The Reproducible Builds project runs a significant testing framework at tests.reproducible-builds.org, to check packages and other artifacts for reproducibility. This month, the following changes were made:

dsa-check-running-kernel script with a packaged version. […]

sources.lst file for our mail server as it's still running Debian buster. […]

debsecan package everywhere; it got installed accidentally via the Recommends relation. […]

#reproducible-builds on irc.oftc.net.

rb-general@lists.reproducible-builds.org


As many of you may be aware, I work with Lars Wirzenius on a project we call Subplot, which is a tool for writing documentation which helps all stakeholders involved with a project to understand how the project meets its requirements.

At the start of February we had FOSDEM, which was once again online, and I decided to give a talk in the Safety and open source devroom to introduce the concepts of safety argumentation and to bring some attention to how I feel that Subplot could be used in that arena. You can view the talk on the FOSDEM website at some point in the future, when they manage to finish transcoding all the amazing talks from the weekend, or, if you are more impatient, on YouTube, whichever you prefer.

If, after watching the talk, or indeed just reading about Subplot on our website, you are interested in learning more about Subplot, or talking with us about how it might fit into your development flow, then you can find Lars and myself in the Subplot Matrix Room or else on any number of IRC networks where I hang around as kinnison.

In my four most recent posts, I went over the memoirs and biographies, the non-fiction, the fiction and the 'classic' novels that I enjoyed reading the most in 2021. But in the very last of my 2021 roundup posts, I'll be going over some of my favourite movies. (Saying that, these are perhaps less my 'favourite films' than the ones worth remarking on; after all, nobody needs to hear that The Godfather is a good movie.)

It's probably helpful to tell you that I took a self-directed course in film history in 2021, based around the first volume of Roger Ebert's The Great Movies. This collection of 100-odd movie essays aims to make a tour of the landmarks of the first century of cinema, and I watched all but a handful before the year was out. I am slowly making my way through volume two in 2022. This tome was tremendously useful, and not simply due to the background context that Ebert added to each film: it also brought me into contact with films I would hardly have come across by any other means. Would I have ever discovered the sly comedy of Trouble in Paradise (1932) or the touching proto-realism of L'Atalante (1934) any other way? It also helped me to 'get around' to watching films I may have put off watching forever: the influential Battleship Potemkin (1925), for instance, and the ur-epic Lawrence of Arabia (1962) spring to mind here.

Choosing a 'worst' film is perhaps more difficult than choosing the best. There are first those that left me completely dry (Ready or Not, Written on the Wind, etc.), and those that were simply poorly executed. And there are those that failed to live up to their own high opinions of themselves, such as the 'made for Reddit' Tenet (2020) or the inscrutable Vanilla Sky (2001), the latter being an almost perfect example of late-20th-century cultural exhaustion.

But I must save my most severe judgement for those films where I took a visceral dislike to how their subjects were portrayed. The sexually problematic Sixteen Candles (1984) and the pseudo-Catholic vigilantism of The Boondock Saints (1999) both spring to mind here, the latter of which combines so many things I dislike into such a short running time that I'd need an entire essay to adequately express how much I disliked it.


Dogtooth (2009) A father, a mother, a brother and two sisters live in a large and affluent house behind a very high wall and an always-locked gate. Only the father ever leaves the property, driving to the factory that he happens to own. Dogtooth goes far beyond any allusion to Josef Fritzl's cellar, though, as the children's education is a grotesque parody of home-schooling. Here, the parents deliberately teach their children the wrong meaning of words (e.g. a yellow flower is called a 'zombie'), all of which renders the outside world utterly meaningless and unreadable, and completely mystifies its very existence. It is this creepy strangeness within a 'regular' family unit in Dogtooth that is both socially and epistemically horrific, and I'll say nothing here of its sexual elements. Despite its cold, inscrutable and deadpan surreality, Dogtooth invites all manner of potential interpretations. Is this film about the artificiality of the nuclear family that the West insists is the benchmark of normality? Or is it, as I prefer to believe, something more visceral altogether: an allegory for the various forms of ontological violence wrought by fascism, as well as a sobering nod towards some of fascism's inherent appeals? (Perhaps it is both. In 1972, French poststructuralists Gilles Deleuze and Félix Guattari wrote Anti-Oedipus, which plays with the idea of the family unit as a metaphor for the authoritarian state.) The Greek-language Dogtooth, elegantly shot, thankfully provides no easy answers.

Holy Motors (2012) There is an infamous scene in Un Chien Andalou, the 1929 film collaboration between Luis Buñuel and famed artist Salvador Dalí. A young woman is cornered in her own apartment by a threatening man, and she reaches for a tennis racquet in self-defence. But the man suddenly picks up two nearby ropes and drags into the frame two large grand pianos... each laden with a dead donkey, a stone tablet, a pumpkin and a bewildered priest. This bizarre sketch serves as a better introduction to Leos Carax's Holy Motors than any elementary outline of its plot, which ostensibly follows 24 hours in the life of a man who must play a number of extremely diverse roles around Paris... all for no apparent reason. (And is he even a man?) Surrealism as an art movement gets a pretty bad rap these days, and perhaps justifiably so. But Holy Motors and Un Chien Andalou serve as a good reminder that surrealism can be, well, 'good, actually'. And if not quite high art, Holy Motors at least demonstrates that surrealism can still be unnerving and hilariously funny. Indeed, when I recalled the whimsy of the plot to a close friend, tears of laughter came unbidden to my eyes once again. ("And then the limousines...!") Still, it is unclear how Holy Motors truly refreshes surrealism for the twenty-first century. Surrealism was, in part, a reaction to the mechanical and unfeeling brutality of World War I and ultimately sought to release the creative potential of the unconscious mind. Holy Motors cannot be responding to another continental conflagration, and so it appears to me to be some kind of commentary on the roles we exhibit in an era of 'post-postmodernity': a sketch on our age of performative authenticity, perhaps, or an idle doodle on the psychosocial function of work. Or perhaps not.
After all, this film was produced in a time that offers the near-universal availability of mind-altering substances, and this certainly changes the context in which this film was both created and, how can I put it, intended to be watched.

Manchester by the Sea (2016) An absolutely devastating portrayal of a character who is unable to forgive himself and is hesitant to engage with anyone ever again. It features a near-ideal balance between portraying unrecoverable anguish and tender warmth, and is paradoxically grandiose in its subtle intimacy. The mechanics of life led me to watch this lying on a bed in a chain hotel by Heathrow Airport, and if this colourless circumstance blunted the film's emotional impact on me, I am probably thankful for it. Indeed, I find myself reduced in this review to fatuously recalling my favourite interactions instead of providing any real commentary. You could write a whole essay about one particular incident: its surfaces, subtexts and angles... all despite nothing of any substance ever being communicated. Truly stunning.

McCabe & Mrs. Miller (1971) Roger Ebert called this movie "one of the saddest films I have ever seen, filled with a yearning for love and home that will not ever come." But whilst it is difficult to disagree with his sentiment, Ebert's choice of "sad" is somehow not quite the right word. Indeed, I've long regretted that our dictionaries don't have more nuanced blends of tragedy and sadness; perhaps the Ancient Greeks can loan us some. Nevertheless, the plot of this film is of a gambler and a prostitute who become business partners in a new and remote mining town called Presbyterian Church. However, as their town and enterprise boom, they come to the attention of a large mining corporation who want to bully or buy their way into the action. What makes this film stand out is not the plot itself, however, but its mood and tone: the town and its inhabitants seem to be thrown together out of raw lumber, covered alternately in mud or frozen ice, and their days (and their personalities) are both short and dark in equal measure. As a brief aside, if you haven't seen a Robert Altman film before, this has all the trappings of being a good introduction. As Ebert went on to observe: "This is not the kind of movie where the characters are introduced. They are all already here." Furthermore, we can see some of Altman's trademark overlapping conversations, a superb handling of ensemble casts, and a quietly subversive view of the tyranny of 'genre'... and the latter in a time when the appetite for revisionist portrayals of the West was not very strong. All of these 'Altmanian' trademarks can be ordered in much stronger measures in his later films: in particular, his comedy-drama Nashville (1975) has 24 main characters, and my jejune interpretation of Gosford Park (2001) is that it is purposefully designed to poke fun at those who take a reductionist view of 'genre', or at least at the audience's expectations.
(In this case, an Edwardian-era English murder mystery in the style of Agatha Christie, but where no real murder or detection really takes place.) On the other hand, McCabe & Mrs. Miller is actually a poor introduction to Altman. The story is told at a suitably deliberate and slow tempo, and the two stars of the film are shown thoroughly defrocked of any 'star status', in both the visual and moral dimensions. All of these traits are, however, this film's strength, adding up to a credible, fascinating and riveting portrayal of the old West.

Detour (1945) Detour was filmed in less than a week, and it's difficult to decide whether the actors or the screenplay is its weakest point... Yet it still somehow seemed to drag me in. The plot revolves around luckless Al, who is hitchhiking to California. Al gets a lift from a man called Haskell, who quickly falls down dead from a heart attack. Al quickly buries the body and takes Haskell's money, car and identification, believing that the police will think Al murdered him. An unstable element is soon introduced in the guise of Vera, who, through a set of coincidences that stretches credulity, knows that this 'new' Haskell (i.e. Al pretending to be him) is not who he seems. Vera then attaches herself to Al in order to blackmail him, and the world starts to spin out of his control. It must be understood that none of this is executed very well. Rather, what makes Detour so interesting to watch is that its 'errors' lend a distinctively creepy and unnatural hue to the film. Indeed, in the early twentieth century, Sigmund Freud used the word unheimlich to describe the experience of something that is not simply mysterious, but creepy in a strangely familiar way. This is almost the perfect description of watching Detour: its eerie nature means that we are not only frequently left second-guessing where the film is going, but are often uncertain whether we are watching the usual objective perspective offered by cinema. In particular, are all the ham-fisted segues, stilted dialogue and inscrutable character motivations actually a product of Al inventing a story for the viewer? Did he murder Haskell after all, despite the film 'showing' us that Haskell died of natural causes? In other words, are we watching what Al wants us to believe?
Regardless of the answers to these questions, the film succeeds precisely because of its accidental or inadvertent choices, so it is an implicit reminder that seeking the director's original intention in any piece of art is a complete mirage. Detour is certainly not a good film, but it just might be a great one. (It is a short film too and, being out of copyright, it is available online for free.)

Safe (1995) Safe is a subtly disturbing film about an upper-middle-class housewife who begins to complain about vague symptoms of illness. Initially claiming that she doesn't feel right, Carol starts to have unexplained headaches, a dry cough and nosebleeds, and eventually begins to have trouble breathing. Carol's family doctor treats her concerns with little care, and suggests to her husband that she see a psychiatrist. Yet Carol's episodes soon escalate. For example, as a 'homemaker' with nothing else to occupy her, Carol orders a new couch for a party. But when the store delivers the wrong one (although it is not altogether clear that they did), Carol has a near breakdown. Unsure where to turn, Carol consults an 'allergist', who tells her she has "Environmental Illness," and so she eventually checks herself into a new-age commune filled with alternative therapies. On the surface, Safe is thus a film about the increasing amount of pesticides and chemicals in our lives, something that was clearly felt far more viscerally in the 1990s. But it is also a film about how the lack of genuine healthcare for women must be seen as a critical factor in the rise of crank medicine. (Indeed, it made for something of an uncomfortable watch during the coronavirus lockdown.) More interestingly, however, Safe gently-yet-critically examines the psychosocial causes that may be aggravating Carol's illnesses, including her vacant marriage, her hollow friends and the 'empty calorie' stimulus of suburbia. None of this should be especially new to anyone: the gendered Victorian term 'hysterical' is often all but spoken throughout this film, and perhaps from the very invention of modern medicine, women's symptoms have regularly been minimised or outright dismissed. (Hilary Mantel's 2003 memoir, Giving Up the Ghost, is especially harrowing on this.) As I noted at the start of this review, the film is subtle in its messaging.
Just to take one example from many, the sound of the cars is always just a fraction too loud: there's a scene where a group is eating dinner with a road in the background, and the total effect can be seen as representing the toxic fumes of modernity invading our social lives and health. I won't spoil the conclusion of this quietly devastating film, but don't expect a happy ending.

The Driver (1978) Critics grossly misunderstood The Driver when it was first released. They interpreted the cold and unemotional affect of the characters with the lack of developmental depth, instead of representing their dissociation from the society around them. This reading was encouraged by the fact that the principal actors aren't given real names and are instead known simply by their archetypes instead: 'The Driver', 'The Detective', 'The Player' and so on. This sort of quasi-Jungian erudition is common in many crime films today (Reservoir Dogs, Kill Bill, Layer Cake, Fight Club), so the critics' misconceptions were entirely reasonable in 1978. The plot of The Driver involves the eponymous Driver, a noted getaway driver for robberies in Los Angeles. His exceptional talent has far prevented him from being captured thus far, so the Detective attempts to catch the Driver by pardoning another gang if they help convict the Driver via a set-up robbery. To give himself an edge, however, The Driver seeks help from the femme fatale 'Player' in order to mislead the Detective. If this all sounds eerily familiar, you would not be far wrong. The film was essentially remade by Nicolas Winding Refn as Drive (2011) and in Edgar Wright's 2017 Baby Driver. Yet The Driver offers something that these neon-noir variants do not. In particular, the car chases around Los Angeles are some of the most captivating I've seen: they aren't thrilling in the sense of tyre squeals, explosions and flying boxes, but rather the vehicles come across like wild animals hunting one another. This feels especially so when the police are hunting The Driver, which feels less like a low-stakes game of cat and mouse than a pack of feral animals working together a gang who will tear apart their prey if they find him. 
In contrast to the undercar neon glow of the Fast & Furious franchise, the urban-realist backdrop of The Driver's LA metropolis contributes to a sincere feeling of artistic fidelity as well. To be sure, most of this is present in the truly excellent Drive, where the chase scenes really do communicate a credible sense of stakes. But the substitution of The Driver's grit with Drive's soft neon tilts it slightly towards that common affliction of crime movies: style over substance. Nevertheless, I can highly recommend watching The Driver and Drive together, as the pairing can tell you a lot about the disconnected socioeconomic practices of the 1980s compared to the 2010s. More than that, however, the pseudo-1980s synthwave soundtrack of Drive captures something crucial to analysing the world of today. In particular, these 'sounds from the past filtered through the present' bring to mind the increasing role of nostalgia for lost futures in the culture of today, where temporality and pop culture references are almost exclusively citational and commemorational.

The Souvenir (2019) The ostensible outline of this quietly understated film follows a shy but ambitious film student who falls into an emotionally fraught relationship with a charismatic but untrustworthy older man. But that doesn't begin to cover the plot, for not only is The Souvenir a film about a young artist who is inspired, derailed and ultimately strengthened by a toxic relationship, it is also partly a coming-of-age drama, a subtle portrait of class and, finally, a film about the making of a film. Still, one of the strokes of genius in this truly heartbreaking movie is that none of these many elements crowds out the others. It never, ever feels rushed. Indeed, there are many scenes where the camera simply 'sits there' and quietly observes what is going on. Other films might smother themselves through references to 18th-century oil paintings, but The Souvenir somehow evades this too. And there's a certain ring of credibility to the story as well, no doubt in part due to the fact it is based on director Joanna Hogg's own experiences at film school. A beautifully observed and multi-layered film; I'll be happy if the sequel is half as good.

The Wrestler (2008) Randy 'The Ram' Robinson is long past his prime, but he is still rarin' to go in the local pro-wrestling circuit. Yet after a brutal beating that seriously threatens his health, Randy hangs up his tights and pursues a serious relationship... and even tries to reconnect with his estranged daughter. But Randy can't resist the lure of the ring, and readies himself for a comeback. The stage is thus set for Darren Aronofsky's The Wrestler, which is essentially about what drives Randy back to the ring. To be sure, Randy derives much of his money from wrestling, as well as his 'fitness', self-image, self-esteem and self-worth. Oh, it's no use insisting that wrestling is fake, for the sport is, needless to say, Randy's identity; it's not for nothing that this film is called The Wrestler. In a number of ways, The Sound of Metal (2019) is both a reaction to (and a quiet remake of) The Wrestler, if only because both movies utilise 'cool' professions to explore such questions of identity. But perhaps it is simply when The Wrestler was produced that makes it the superior film. Indeed, the role of time feels very important in The Wrestler. In the first instance, time is clearly taking its toll on Randy's body, but I felt it more strongly in the sense that this was very much a pre-2008 film, released on the cliff-edge of the global financial crisis and the concomitant precarity of the 2010s. It is curious to consider that you couldn't make The Wrestler today, although not because the relationship to work has changed in any fundamental way. (Indeed, isn't it somewhat depressing to realise that, setting the 'work from home' trend to one side, since the start of the pandemic we now require even more people to wreck their bodies and mental health to cover their bills?) No, what I mean to say here is that, post-2016, you cannot portray wrestling on-screen without, how can I put it, unwelcome connotations. All of which then reminds me of Minari's notorious red hat...
But I digress. The Wrestler is a grittily stark, darkly humorous look into the life of a desperate man and a sorrowful world, all through one tragic profession.

Thief (1981) Frank is an expert professional safecracker who specialises in high-profile diamond heists. He plans to use his ill-gotten gains to retire from crime and build a life for himself with a wife and kids, so he signs on with a top gangster for one last big score. This, of course, could be the plot to any number of heist movies, but Thief does something different. Similar to The Wrestler and The Driver (see above) and a number of other films that I watched this year, Thief seems to be saying something about our relationship to work and family in modernity and postmodernity. Indeed, the 'heist film', we are told, is an understudied genre, but part of the pleasure of watching these films is said to arise from how they portray our desired relationship to work. In particular, Frank's desire to pull off that last big job feels less about the money it would bring him than a displacement from (or proxy for) fulfilling some deep-down desire to have a family or indeed any relationship at all. Because in theory, of course, Frank could enter into a fulfilling long-term relationship right away, without stealing millions of dollars in diamonds... but that's kinda the entire point: Frank needing just one more theft is an excuse to not pursue a relationship and to put it off indefinitely in favour of 'work'. (And as these are Federal crimes, it also means Frank cannot put down meaningful roots in a community.) All this is communicated extremely subtly in the justly lauded low-key diner scene, by far the best scene in the movie. The visual aesthetic of Thief is as if you set The Warriors (1979) in a similarly filthy Chicago, with the Xenophon-inspired plot of The Warriors replaced with an almost deliberate lack of plot development... and the allure of The Warriors' fantastical criminal gangs (with their enticingly well-defined social identities) substituted by a bunch of amoral individuals with no solidarity beyond the immediate moment. A tale of our time, perhaps.
I should warn you that the ending of Thief is famously weak, but this is a gritty, intelligent and strangely credible heist movie before you get there.

Uncut Gems (2019) The most exhausting film I've seen in years: the cinematic equivalent of four cups of double espresso. I didn't even bother trying to sleep after downing Uncut Gems late one night. Directed by the Safdie Brothers, it often felt like I was watching two films that had been made at the same time. (Or do I mean two films at 2X speed?) No, whatever clumsy metaphor you choose to adopt, the unavoidable effect of this film's finely-tuned chaos is an uncompromising and anxiety-inducing piece of cinema. The plot follows Howard, a man lost to his countless vices (mostly gambling, with a significant side hustle in adultery), but you get the distinct impression he would be happy with anything that will give him another high. A true junkie's junkie, you might say. You know right from the beginning it's going to end in some kind of disaster; the only question remaining is precisely how and what. With Howard portrayed by an (almost unrecognisable) Adam Sandler, there's an uncanny sense of distance in the emotional chasm between 'Sandler-as-junkie' and 'Sandler-as-regular-star-of-goofy-comedies'. Yet instead of being distracting and reducing the film's effect, this possibly deliberate intertextuality somehow adds to the masterfully controlled mayhem. My heart races just at the memory. Oof.

Woman in the Dunes (1964) I ended up watching three films that feature sand this year: Denis Villeneuve's Dune (2021), Lawrence of Arabia (1962) and Woman in the Dunes. But it is this last film, Hiroshi Teshigahara's 1964 feature, that will stick in my mind in the years to come. Sure, there is none of the Medician intrigue of Dune or the Super Panavision 70 of Lawrence of Arabia (or its quasi-orientalist score, itself likely stolen from Anton Bruckner's 6th Symphony), but Woman in the Dunes doesn't have to assert its confidence so boldly, and it reveals the enormity of its plot slowly and deliberately instead. Woman in the Dunes never rushes to get to the film's central dilemma, and it uncovers its terror in little hints and insights, all whilst establishing the daily rhythm of life. Woman in the Dunes has something of the same uncanny horror as Dogtooth (see above), as well as its broad range of potential interpretations. Both films permit a wide array of readings without resorting to being deliberately obscurantist or just plain random, and it is perhaps for this reason that I enjoyed them so much. It is true that asking 'So what does the sand mean?' sounds tediously sophomoric when shorn of any context, but it somehow applies to this thoughtfully self-contained piece of cinema.

A Quiet Place (2018) Although A Quiet Place was not actually one of the best films I saw this year, I'm including it here as it is certainly one of the better 'mainstream' Hollywood franchises I came across. Not only is the film very ably constructed, it engages on a visceral level: it is rare that I can empathise with the peril of conventional horror movies (I tend to prefer focusing on their cultural and political aesthetics), but I did here. The conceit of this particular post-apocalyptic world is that a family is forced to live in almost complete silence while hiding from creatures that hunt by sound alone. Still, A Quiet Place engages on an intellectual level too, and this probably works in tandem with the pure 'horrorific' elements to make it stick in your mind. In particular, and to my mind at least, A Quiet Place is a deeply conservative American film below the surface: it exalts the family structure and a certain kind of sacrifice for your family. (The music often had a passacaglia-like strain too, forming a tombeau for America.) Moreover, you survive in this dystopia by staying quiet (that is to say, by staying stoic), suggesting that in the wake of any conflict that might beset the world, the best thing to do is to keep quiet. Even communicating with your loved ones can be deadly to both of you, so do not emote, acquiesce quietly to your fate, and don't, whatever you do, speak up. (Or join a union.) I could go on, but A Quiet Place is more than this. It's taut and brief, and despite cinema being an increasingly visual medium, it encourages its audience to develop a new relationship with sound.

| Publisher: | Penguin Books |

| Copyright: | 2018 |

| Printing: | 2019 |

| ISBN: | 0-525-55880-2 |

| Format: | Kindle |

| Pages: | 615 |